Chapter 11


Lead qualification lessons

Our favorite way to learn about lead qualification is to talk to our peers about what's worked for them — and what to watch out for. Practitioners in sales, marketing, and RevOps from across our community have generously shared some obstacles overcome and lessons learned.

(ICYMI, we shared some of Clearbit's own speed bumps in The human in the lead score: what we've learned at Clearbit.)

🍎 Lead scoring models should start with a proper regression analysis on a substantial dataset of customers. Sylvain Giuliani from Census shares how, at a previous company, an early model didn't work well at scale because it was tested on too small a sample.

We spent six weeks building a beautiful top-to-bottom scoring model. It did classic MQL scoring with company size, Alexa rank, etc., and used Clearbit Reveal to detect when target accounts were on our website.

Our biggest mistake was that we tested all of that on a small sample (about 100) of existing accounts to see if they fit our analysis. When we deployed the models, we quickly realized we hadn't accounted for the fill rates of the values we were using, that is, how often each field was actually populated. So, we ended up with tons of unqualified leads, and the ones that were qualified were the obvious ones you didn't need data to qualify.
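Fill rate is the share of records in which a field actually has a value. A check like the one below, run over the full customer base before a scoring model goes live, would surface sparse fields early. This is a minimal Python sketch: the field names and records are hypothetical, and treating `None` or an empty string as "missing" is an assumption about how the enrichment data is stored.

```python
from typing import Any


def fill_rates(records: list[dict[str, Any]], fields: list[str]) -> dict[str, float]:
    """Share of records in which each field is populated (None or "" counts as missing)."""
    if not records:
        return {field: 0.0 for field in fields}
    return {
        field: sum(1 for r in records if r.get(field) not in (None, "")) / len(records)
        for field in fields
    }


# Hypothetical enriched records; field names are illustrative only.
leads = [
    {"employees": 250, "industry": "Software", "tech_stack": None},
    {"employees": None, "industry": "Retail", "tech_stack": ""},
    {"employees": 40, "industry": None, "tech_stack": "Salesforce"},
    {"employees": 1200, "industry": "Finance"},
]

rates = fill_rates(leads, ["employees", "industry", "tech_stack"])
low_fill = [field for field, rate in rates.items() if rate < 0.5]  # 0.5 is an arbitrary cutoff
print(rates)     # {'employees': 0.75, 'industry': 0.75, 'tech_stack': 0.25}
print(low_fill)  # ['tech_stack']
```

In an additive model, a field with a low fill rate awards its points to only a fraction of leads, so leaning on it quietly under-scores everyone whose record is blank.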

The lesson: test with a bigger dataset. Always use data science for your model (regression analysis, etc.) instead of going by gut feeling and a small dataset in a spreadsheet.
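As a rough illustration of what "use data science" can mean here, the sketch below fits a logistic regression, a common choice when the outcome is binary (became a customer or not), using plain batch gradient descent. It is a minimal, dependency-free example under stated assumptions: the two features and the eight historical records are made up, and a real model would be trained on far more accounts and checked against held-out data. In practice you would more likely use a library such as scikit-learn's `LogisticRegression`.

```python
import math


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def predict_proba(w: list[float], b: float, x: list[int]) -> float:
    """Estimated probability that a lead with features x converts."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def fit_logistic(X, y, lr=0.5, epochs=2000):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = predict_proba(w, b, xi) - yi  # gradient of the log loss w.r.t. the logit
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


# Hypothetical history. Features: [fits_target_profile, non_business_email];
# label 1 = became a customer.
X = [[1, 0], [1, 0], [1, 1], [1, 0], [0, 0], [0, 1], [0, 1], [0, 0]]
y = [1, 1, 0, 1, 0, 0, 0, 1]

w, b = fit_logistic(X, y)
print("weights:", [round(v, 2) for v in w], "bias:", round(b, 2))
```

The fitted weights hint at how much each attribute should count: positive for fitting the target profile, negative for a non-business email. Those signs come from the data rather than from gut feeling.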

🍎 How do you properly score leads that have been inactive for a while? Sylvain recommends you reset a lead's score after a long period of dormancy (he uses three months) and assign negative lead score points for shorter periods of inactivity, like two weeks.

In general, point-based lead scores are more effective when they incorporate negative values for low-intent behavior, low-fit attributes, and engagement time frame/frequency.

Here's another lead scoring mishap we had at that company: our incremental scoring model had no negative value and never reset.

We had a threshold score where if a lead hit 100 points, we'd route it to the sales team. You know, the classic:

- Visited pricing page 3 times in 1 week = +5 points
- Has a good job title = +10 points
- Used feature X = +10 points

The problem was that people could take two years to do all that if they wanted. 🤦‍♂️ We had all of these old, slow-to-engage accounts that were routed to the sales team.

The lesson was to use negative signals, such as:

- Non-business email = -20 points
- Hasn't logged into our product in 2 weeks = -10 points

And also to reset the score after a long period of inactivity (three months).
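Here is a minimal Python sketch of the rules described above: fit and activity points, a -10 penalty after two weeks without activity, a full reset after three months of dormancy, and routing at 100 points. The signal names are hypothetical, "three months" is approximated as 90 days, and two details are assumptions rather than Sylvain's exact implementation: only activity after the most recent dormancy gap is counted, and a lead with no activity at all gets its fit points only.

```python
from datetime import date, timedelta

ROUTE_THRESHOLD = 100                # route to sales at 100 points
RESET_AFTER = timedelta(days=90)     # "three months" of dormancy, approximated as 90 days
PENALIZE_AFTER = timedelta(days=14)  # "2 weeks" without activity

# Point values from the example above; the signal names are hypothetical.
ACTIVITY_POINTS = {"pricing_page_3x_in_week": 5, "used_feature_x": 10}
GOOD_TITLE_POINTS = 10
NON_BUSINESS_EMAIL_POINTS = -20
INACTIVE_POINTS = -10


def score_lead(activity: list[tuple[date, str]], good_title: bool,
               business_email: bool, today: date) -> int:
    """Score a lead from dated activity signals plus fit attributes."""
    fit = (GOOD_TITLE_POINTS if good_title else 0) + (
        0 if business_email else NON_BUSINESS_EMAIL_POINTS)
    if not activity:
        return fit  # assumption: no activity yet means fit points only

    events = sorted(activity)
    last_seen = events[-1][0]
    if today - last_seen >= RESET_AFTER:
        return 0  # long dormancy: reset the whole score

    # Count only activity since the most recent dormancy gap, so old points don't linger.
    start = 0
    for i in range(1, len(events)):
        if events[i][0] - events[i - 1][0] >= RESET_AFTER:
            start = i
    score = fit + sum(ACTIVITY_POINTS[name] for _, name in events[start:])

    if today - last_seen >= PENALIZE_AFTER:
        score += INACTIVE_POINTS  # shorter inactivity: negative points, not a reset
    return score


def should_route(score: int) -> bool:
    return score >= ROUTE_THRESHOLD


today = date(2024, 6, 30)
recent = [(date(2024, 6, 20), "used_feature_x"), (date(2024, 6, 25), "pricing_page_3x_in_week")]
stale = [(date(2024, 1, 5), "used_feature_x")]
print(score_lead(recent, good_title=True, business_email=True, today=today))  # 25
print(score_lead(stale, good_title=True, business_email=True, today=today))   # 0
```

Unlike the original model, the same pricing-page visit that once pushed a lead toward 100 points stops counting after a long quiet spell, so a two-year trickle of activity no longer reaches sales.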

🍎 Jasmine Carnell at Lessonly by Seismic emphasizes the importance of testing any changes to communications. This is critical when relying on automated scoring, routing, and engagement systems, as process rules, approaches, and campaigns change.

Lessonly recently went on Summer Break. It was a great time for all employees to rest and recharge after such a long year during the pandemic. Leading up to this break, I worked with the head of our inbound team to manage an automated cadence for any new inbound leads.

Lesson learned: test, test, test! We thankfully avoided sending out hundreds of emails with unmapped fields because of our testing. With a week to make adjustments, every prospect heard back from us in a timely manner.
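One way to make "test, test, test" concrete for an automated cadence is a preflight check that flags any merge field without a value before a send goes out. The sketch below assumes a hypothetical `{{field_name}}` placeholder syntax and dictionary-shaped lead records; real sales engagement tools each have their own template syntax and often their own preview or test-send features.

```python
import re

# Assumed placeholder syntax: {{field_name}}, optionally with inner spaces.
MERGE_FIELD = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def unmapped_fields(template: str, record: dict) -> list[str]:
    """List merge fields in the template that have no usable value in the record."""
    missing = []
    for name in MERGE_FIELD.findall(template):
        value = record.get(name)
        if (value is None or str(value).strip() == "") and name not in missing:
            missing.append(name)
    return missing


def preflight(template: str, records: list[dict]) -> dict:
    """Map each record's id to its missing fields; an empty result means safe to send."""
    problems = {}
    for record in records:
        missing = unmapped_fields(template, record)
        if missing:
            problems[record.get("id")] = missing
    return problems


template = ("Hi {{first_name}}, thanks for reaching out about {{company}}. "
            "Our team is back from summer break on {{return_date}}.")
records = [
    {"id": 1, "first_name": "Ana", "company": "Acme", "return_date": "July 12"},
    {"id": 2, "first_name": "", "company": "Globex"},
]
problems = preflight(template, records)
print(problems)  # {2: ['first_name', 'return_date']}
```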

🍎 Test a lead scoring model with one or two sales reps first to prove that it gets results. Data helps align marketing and sales around lead scoring from the start, says Phil Gamache at WordPress.com, who shares an experience from a previous role.

The biggest mishaps I've had were trying to make lead scoring projects 'marketing-driven' or even 'coming from the top.' I'd shout from my marketing chair over to the head of sales: 'We need to change this, our process is wrong.'

No matter what kind of sales team you work with, these projects need to be collaborative from the start. The goal is alignment and it starts with common definitions and working towards shared objectives.

Start small. Get early proof that having sales focus on quality leads has better outcomes than letting them run wild in the CRM picking their own leads. Choose one or two reps on your team, test out your model and process with them, and gather data. Have them be your champions for implementing this across the rest of the team.
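A pilot with one or two reps produces small samples, so it is worth checking whether the difference in outcomes is larger than noise before presenting it as proof. The sketch below applies a standard two-proportion z-test to conversion rates. The counts are hypothetical, and because pilot reps are hand-picked rather than randomly assigned, a favorable result is encouraging evidence rather than a controlled experiment.

```python
import math


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """z statistic for the difference between two conversion rates, using a pooled rate."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (p_a - p_b) / se


def one_sided_p_value(z: float) -> float:
    """P(Z >= z) for a standard normal Z."""
    return 0.5 * math.erfc(z / math.sqrt(2))


# Hypothetical pilot: 2 reps converted 18 of 120 scored leads (15%);
# the rest of the team converted 36 of 400 self-selected leads (9%).
z = two_proportion_z(18, 120, 36, 400)
p = one_sided_p_value(z)
print(f"z = {z:.2f}, one-sided p = {p:.3f}")
```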

🍎 Jordan Woods at interviewing.io points out that fancy data points and segmentations don't necessarily improve a lead score. Always test new scoring attributes before deploying them so you don't overcomplicate things.

I once thought I was really smart because I championed the idea that layering technographic data into a lead score (e.g., a lead using expensive technologies) was a great way to suss out whether or not a company would be a good candidate for our product.

It turned out that it just limited the size of our target account list and performed no better than our typical employee range and industry criteria.
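Jordan's check can be framed as two numbers per candidate attribute: how much it shrinks the target list, and whether the accounts it keeps convert at a higher rate than those selected by the baseline criteria alone. The minimal sketch below uses made-up accounts that mirror the anecdote; the field names and the baseline employee and industry ranges are assumptions.

```python
def summarize(selected):
    """Count accounts and compute the share that became customers."""
    won = sum(1 for a in selected if a["won"])
    return {"accounts": len(selected), "win_rate": won / len(selected) if selected else 0.0}


def evaluate_attribute(accounts, baseline, candidate):
    """Compare the baseline criteria with baseline AND a candidate attribute."""
    base = [a for a in accounts if baseline(a)]
    combined = [a for a in base if candidate(a)]
    return {"baseline": summarize(base), "with_candidate": summarize(combined)}


def baseline(a):
    # Assumed "typical employee range and industry" criteria.
    return 50 <= a["employees"] <= 1000 and a["industry"] in {"Software", "Finance"}


def uses_expensive_tech(a):
    # Hypothetical technographic flag.
    return a["expensive_tech"]


accounts = [
    {"employees": 200, "industry": "Software", "expensive_tech": True, "won": True},
    {"employees": 300, "industry": "Software", "expensive_tech": False, "won": True},
    {"employees": 80, "industry": "Finance", "expensive_tech": True, "won": False},
    {"employees": 600, "industry": "Finance", "expensive_tech": False, "won": False},
    {"employees": 150, "industry": "Software", "expensive_tech": False, "won": True},
    {"employees": 900, "industry": "Finance", "expensive_tech": True, "won": True},
    {"employees": 20, "industry": "Software", "expensive_tech": True, "won": False},
    {"employees": 400, "industry": "Retail", "expensive_tech": True, "won": True},
]

result = evaluate_attribute(accounts, baseline, uses_expensive_tech)
print(result)  # baseline keeps 6 accounts, the candidate keeps 3; both win 2 in 3
```

If the win rate with the extra attribute is no better, the attribute only costs reach. With real data, the same comparison should run on far more accounts than this toy list.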

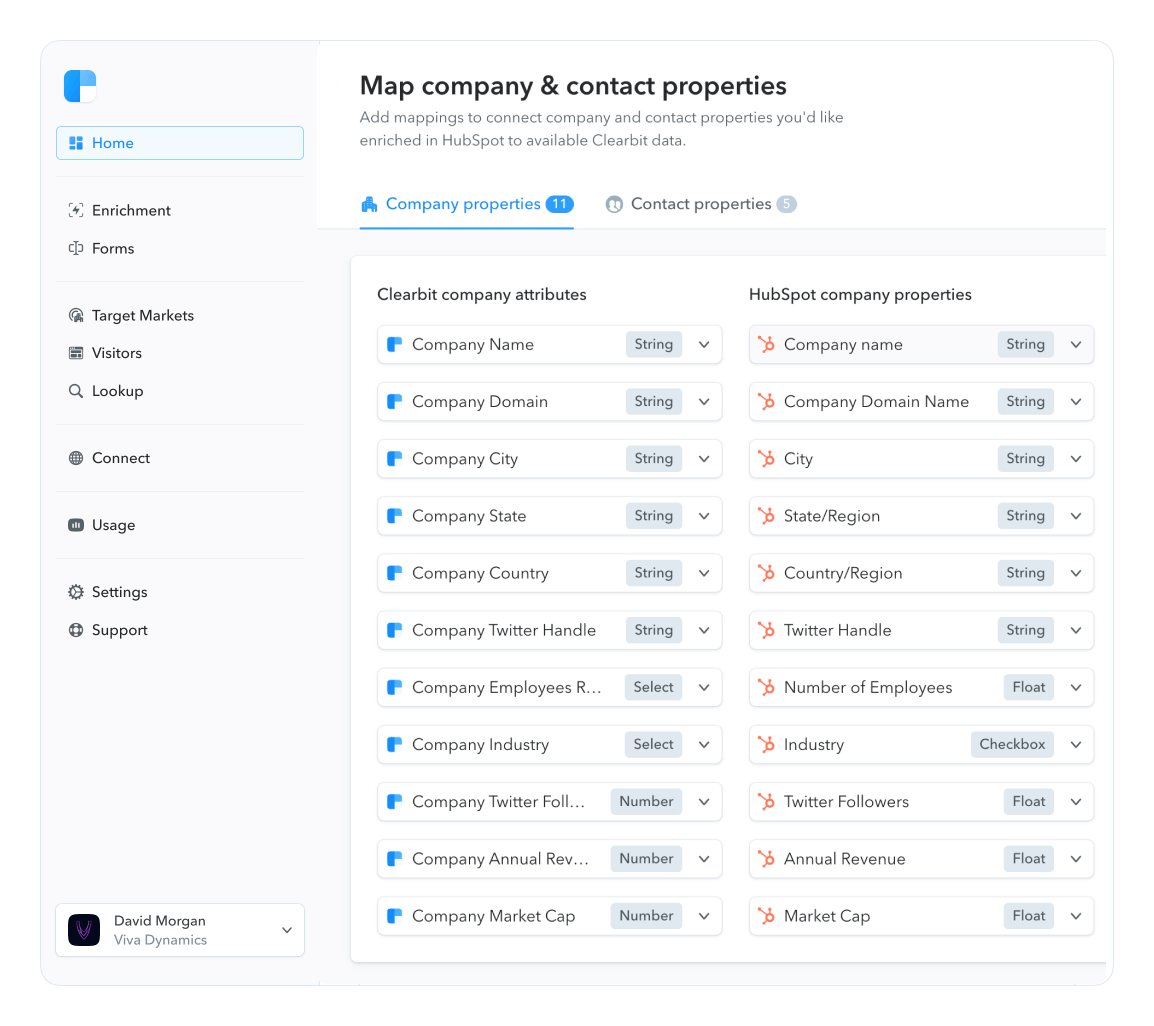

🍎 Don Otvos at LeanData shares how they keep data clean and actionable for sales reps.

When reps talk to a lead, we want them to work from all known data about that account, so we use lead-to-account matching to link every lead to the company they work for.

We avoid dirty data by tying enrichment to a single identifier per record type: web domains for account enrichment and email addresses for individual lead enrichment. This makes it easier to identify duplicates and merge data.

We also take the time to do data cleanup on companies that have merged or been acquired, making sure everything aligns.
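Below is a minimal Python sketch of domain-based lead-to-account matching with email-based deduplication. It is not LeanData's matching logic. It assumes accounts store a website URL and leads store an email address, and it handles acquired companies with a hypothetical alias map from the acquired company's domain to the surviving account's domain. The free-email list is a short illustrative sample, not a complete one.

```python
# Illustrative, incomplete list of consumer email domains that should never match an account.
FREE_EMAIL_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com", "hotmail.com"}


def normalize_domain(value: str) -> str:
    """Reduce a website URL or an email address to a bare, lowercase domain."""
    value = value.strip().lower()
    if "@" in value:
        value = value.rsplit("@", 1)[1]
    for prefix in ("https://", "http://"):
        if value.startswith(prefix):
            value = value[len(prefix):]
    value = value.split("/", 1)[0]
    if value.startswith("www."):
        value = value[len("www."):]
    return value


def match_leads_to_accounts(leads, accounts, domain_aliases=None):
    """Return ({email: account_id or None}, [duplicate emails])."""
    domain_aliases = domain_aliases or {}
    account_by_domain = {normalize_domain(a["website"]): a["id"] for a in accounts}
    matches, duplicates = {}, []
    for lead in leads:
        email = lead["email"].strip().lower()  # email is the single lead identifier
        if email in matches:
            duplicates.append(email)
            continue
        domain = normalize_domain(email)
        domain = domain_aliases.get(domain, domain)  # acquired company -> parent domain
        if domain in FREE_EMAIL_DOMAINS:
            matches[email] = None
        else:
            matches[email] = account_by_domain.get(domain)
    return matches, duplicates


accounts = [{"id": "A1", "website": "https://www.acme.com/"},
            {"id": "A2", "website": "globex.com"}]
leads = [{"email": "Jane@Acme.com"}, {"email": "jane@acme.com "},
         {"email": "bob@initech.com"}, {"email": "sam@gmail.com"}]
aliases = {"initech.com": "globex.com"}  # hypothetical: Globex acquired Initech

matches, duplicates = match_leads_to_accounts(leads, accounts, aliases)
print(matches)     # {'jane@acme.com': 'A1', 'bob@initech.com': 'A2', 'sam@gmail.com': None}
print(duplicates)  # ['jane@acme.com']
```

Normalizing both identifiers before comparing is what lets two differently typed copies of the same email collapse into one lead, and what lets `https://www.acme.com/` and `jane@acme.com` meet on the same domain.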

🍎 Loren Posendek at Wiz reflects on the benefits of having a direct line of communication between marketing and sales.

I've learned that close alignment with sales is crucial to the success and effectiveness of lead qualification and routing. Not only does this alignment strengthen the relationship between marketing and sales, but it allows the teams to openly provide feedback that drives the best results.

Like the time earlier in my career when I mistakenly sent 700 event leads to the ADRs' lead queues… oops! Because we had close alignment and an open line of communication with our team via Slack, we quickly pulled the leads back and adjusted the automated workflow template to prevent it from happening again.

We hope you're inspired to take a closer look at your company's qualification and routing systems, and encouraged by the fact that it's an iterative process.

You don't need to get it right on the first try. Hitting roadblocks and making mistakes is part of the deal, and every time you do, you'll learn a way to make your experience better for customers and reps alike.